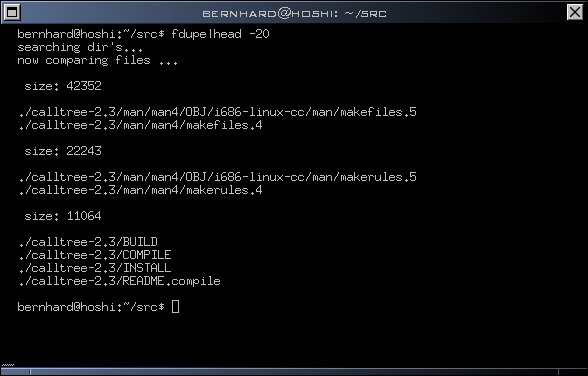

fdupes

identifies duplicate files within given directories

https://github.com/adrianlopezroche/fdupes

10 reviews

Latest reviews

Very helpful and speedy. Running it with --summarize --recurse tells you whether the current directory and its sub-directories contain some or many duplicates. If they do, you can auto-delete the dups with --delete --noprompt --recurse. For manual control, use --delete --recurse alone: for each duplicated file you are shown every path that contains it, and you can keep one, several, or all of the copies.

Very handy. Works exactly as expected and had no problems with it. Nice and fast too.

Works great. For a description of the different options, go to https://github.com/adrianlopezroche/fdupes

I needed to find duplicate pictures in a number of subdirectories. Simple and works perfectly.

Thanks @ravenheart for the example. This program works excellently. However, I have over 1300 sets, so this is going to take a minute. Still, the program does do some neat things. For example, it will find identical files that have different names and sit in different directories. That's what's wo0t! It will also find identical files with different names in the same directory. Pretty neat, to say the least. 5 stars, respect and thanks to the programmer(s). Here is the --help output: http://imageshack.com/a/img661/9648/jl4otM.png
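To illustrate the kind of matching this review describes (same content, whatever the file name or directory), here is a minimal Python sketch. It is not fdupes's own code. It assumes a simple two-step comparison, file size first and then a SHA-256 digest, and the names `file_digest` and `find_duplicate_sets` are hypothetical, chosen only for this example.

```python
import hashlib
import os
from collections import defaultdict


def file_digest(path, chunk_size=1 << 16):
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def find_duplicate_sets(root):
    """Group regular files under `root` whose contents are identical.

    Assumption for this sketch: two files count as duplicates when they
    have the same size and the same SHA-256 digest. Names and
    directories are ignored.
    """
    # Step 1: bucket files by size, since files of different sizes
    # cannot be identical.
    by_size = defaultdict(list)
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            if os.path.isfile(path) and not os.path.islink(path):
                by_size[os.path.getsize(path)].append(path)

    # Step 2: within each size bucket, bucket again by content digest.
    duplicate_sets = []
    for paths in by_size.values():
        if len(paths) < 2:
            continue
        by_digest = defaultdict(list)
        for path in paths:
            by_digest[file_digest(path)].append(path)
        duplicate_sets.extend(
            sorted(group) for group in by_digest.values() if len(group) > 1
        )
    return duplicate_sets
```

Calling `find_duplicate_sets("/home/username/Downloads")` would return a list of lists, one per duplicate set. The sketch only reports duplicates and never deletes anything.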

Works great. As an example, to check your Downloads folder along with all of its subfolders, enter this in a terminal: `fdupes -r -d /home/username/Downloads`. After it scans, you'll be prompted to choose which file of each duplicate set you want to keep.
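If you would rather review the duplicates from a script before deleting anything, a report-only run can be wrapped as in the sketch below. It assumes that `fdupes -r` (no `-d`) prints each duplicate set as consecutive lines of paths with a blank line between sets, and that no path contains a newline. The helper names `parse_fdupes_output` and `list_duplicates` are hypothetical.

```python
import shutil
import subprocess


def parse_fdupes_output(text):
    """Split fdupes-style output into lists of paths, one list per set.

    Assumes sets are separated by blank lines.
    """
    sets, current = [], []
    for line in text.splitlines():
        if line.strip():
            current.append(line)
        elif current:
            # A blank line closes the set collected so far.
            sets.append(current)
            current = []
    if current:
        sets.append(current)
    return sets


def list_duplicates(directory):
    """Run `fdupes -r` (report only, nothing is deleted) and parse the result."""
    if shutil.which("fdupes") is None:
        raise RuntimeError("fdupes is not installed or not on PATH")
    result = subprocess.run(
        ["fdupes", "-r", directory],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_fdupes_output(result.stdout)
```

For example, `list_duplicates("/home/username/Downloads")` would return the duplicate sets as lists of paths, which you can inspect before running the interactive `-d` command from the review.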